Neuro Rook 0.3: First Desktop UI and Tests as AI Guardrails

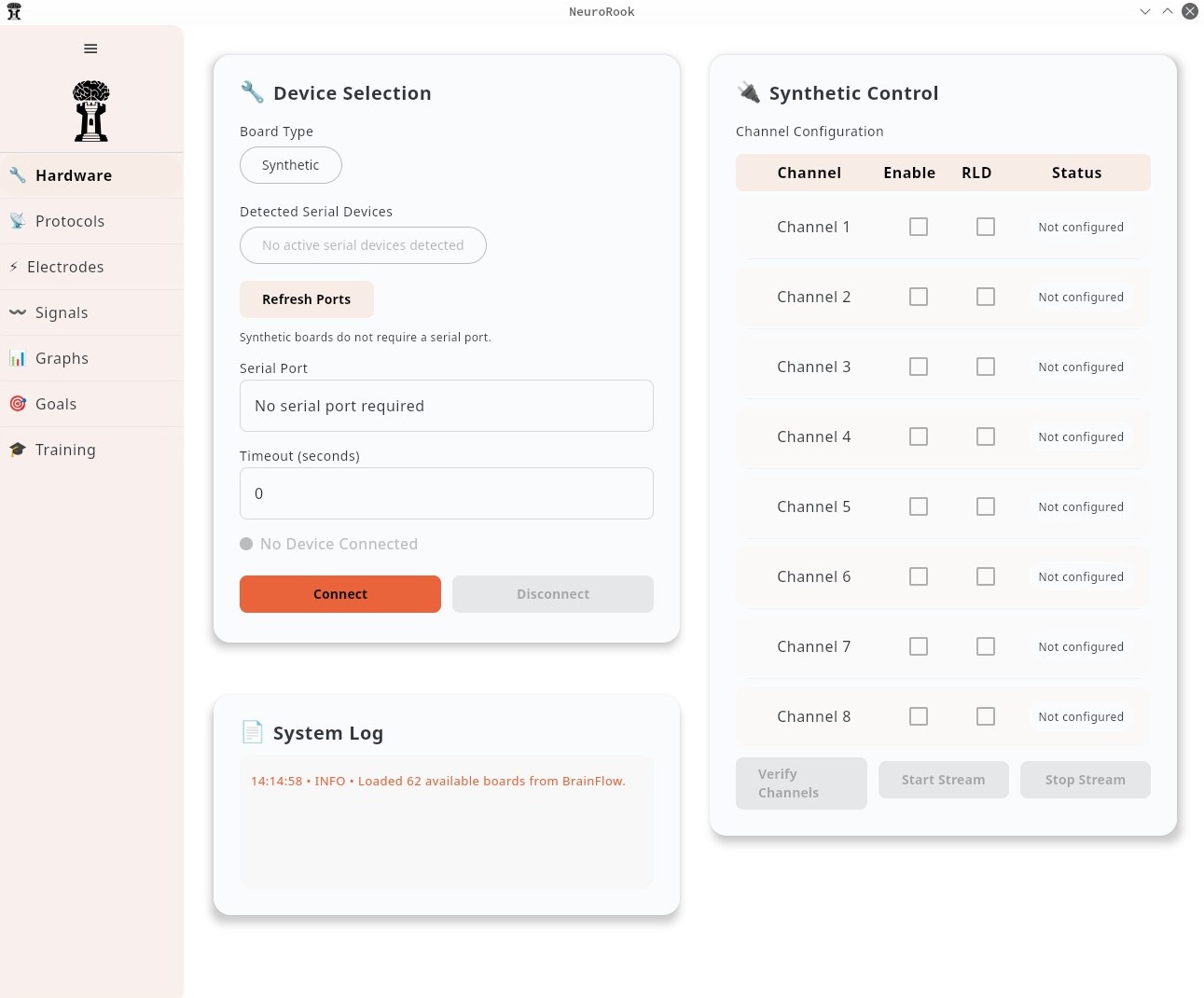

This release of the Neuro Rook neuroscience biofeedback suite is a first step toward a real graphical interface on top of the stack we have been building. The screenshot above shows the desktop app: hardware configuration, synthetic board mode, the channel table, and a small system log (including BrainFlow loading the available boards).

I am not a front-end developer, so the workflow that clicked for me started in Figma: the product has an AI-assisted mode where you steer the layout through a chat-style prompt. I have only used it on the free tier, but that is enough to turn a rough description into screens, spacing, and component structure without spending hours pushing rectangles by hand. For now, Figma’s codegen path targets React, not Jetpack Compose. That is acceptable: the React output serves as a clear intermediate representation, and agents can translate it into our Kotlin and Compose code, so the extra step does not become a bottleneck.

This release also puts more weight on automated tests—unit, integration, and system—both for the usual reasons and because they work well as guardrails for AI-assisted changes. They spell out what “still correct” means before you accept a large refactor or a generated patch.

The usual objection is that some code gives a poor return on the effort of hand-written unit tests: boilerplate, glue, or branches that are not where the product risk lives. Agentic tooling changes the trade-off: closing the last few percent of coverage is often cheap background work rather than a debate about whether it is worth it. This repo is also a proving ground for that style of development: tests sketch the contract, and agents can fill in coverage or routine paths without turning review into guesswork.
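To make the guardrail idea concrete, here is a minimal Kotlin sketch of a test that pins down what "still correct" means for one small function. The `rms` helper and `rmsContractHolds` are hypothetical names invented for illustration; they are not taken from the Neuro Rook codebase, and the sketch uses plain `check` from the standard library instead of whatever test framework the project actually uses.

```kotlin
import kotlin.math.abs
import kotlin.math.sqrt

// Hypothetical helper for illustration only: root-mean-square amplitude
// of one channel's samples. Not part of the Neuro Rook codebase.
fun rms(samples: DoubleArray): Double {
    require(samples.isNotEmpty()) { "samples must not be empty" }
    return sqrt(samples.sumOf { it * it } / samples.size)
}

// The "contract": a few properties that must survive any refactor or
// generated patch, however the body of rms() ends up being written.
fun rmsContractHolds(): Boolean {
    // A flat signal has zero amplitude.
    val flatIsZero = rms(doubleArrayOf(0.0, 0.0, 0.0)) == 0.0
    // A signal alternating between +2 and -2 has an RMS of 2.
    val squareWave = abs(rms(doubleArrayOf(2.0, -2.0, 2.0, -2.0)) - 2.0) < 1e-9
    // Empty input is rejected instead of silently returning NaN.
    val rejectsEmpty =
        runCatching { rms(doubleArrayOf()) }.exceptionOrNull() is IllegalArgumentException
    return flatIsZero && squareWave && rejectsEmpty
}

fun main() {
    check(rmsContractHolds()) { "rms contract violated" }
    println("rms contract holds")
}
```

The point of the sketch is the shape, not the function: the assertions are written against behavior rather than implementation, so an agent can rewrite the body freely and the review question reduces to whether the contract still passes.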

Something I want to explore further is how test-driven development compares with implementation-first work when AI agents are in the loop. TDD was pushed for years in “classical” development; the open question for me is whether the same advice still holds, or when it breaks down, once generation and refactors are cheap. I still have experiments I want to run in that space.

Process-wise, this release still landed as one large commit. On a greenfield project that is tolerable—commit history and review granularity matter less than when you are making small, careful edits on a long-lived codebase—but it is not a habit I want to keep. I plan to move toward smaller releases on a steadier cadence (about every two weeks), with more atomic commits, and we will see how that holds up.

I also switched from GitHub Copilot in IntelliJ IDEA to Cursor with a familiar editor layout. There is a real learning curve: instructions, project rules, and prompts steer the model in different directions, and learning to tune that is part of the job.

For the next cycle I hope to close the last stretch toward full coverage, ship another screen in the UI, and keep moving toward an MVP, meaning feature parity with the earlier Python + Qt version. There is still a long roadmap, but I am happy with how the project is coming together.